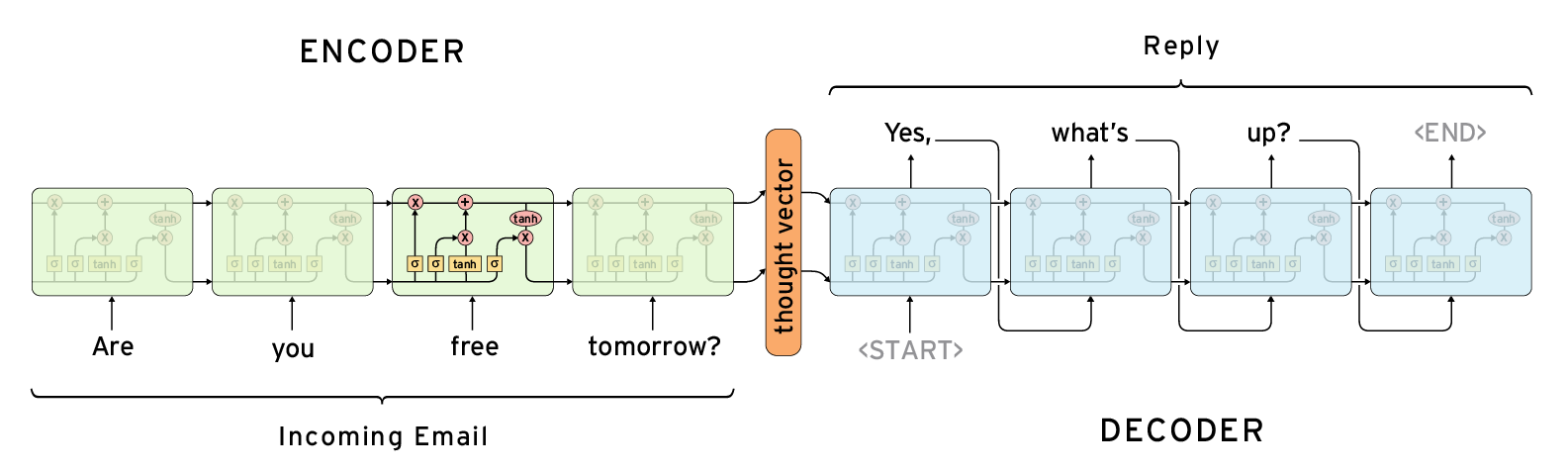

Seq2Seq Chatbot

This is a 200-line implementation of a Twitter/Cornell-Movie chatbot. Please read the following references before reading the code:

- Practical-Seq2Seq

- The Unreasonable Effectiveness of Recurrent Neural Networks

- Understanding LSTM Networks (optional)

Prerequisites

- Python 3.6

- TensorFlow >= 2.0

- TensorLayer >= 2.0
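
A quick way to confirm the prerequisites before training is to check the installed versions from Python. The snippet below is a minimal sketch, not part of this repository: `parse_version`, `check_prerequisites`, and `REQUIRED` are hypothetical names, and it assumes that both packages expose a `__version__` attribute and that any Python at or above 3.6 is acceptable.

```python
import sys
from importlib import import_module

# Minimum versions copied from the prerequisites list (assumed to be lower bounds).
REQUIRED = {"tensorflow": (2, 0), "tensorlayer": (2, 0)}


def parse_version(text):
    """Turn '2.3.1' or '2.0.0-rc1' into a tuple of leading integers, e.g. (2, 3, 1)."""
    parts = []
    for piece in text.split("."):
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break
            digits += ch
        if not digits:
            break  # stop at the first component with no leading digits
        parts.append(int(digits))
    return tuple(parts)


def check_prerequisites(required=REQUIRED):
    """Return a list of human-readable problems; an empty list means nothing was flagged."""
    problems = []
    if sys.version_info < (3, 6):
        problems.append("Python 3.6 or newer is required")
    for name, minimum in required.items():
        try:
            module = import_module(name)
        except ImportError:
            problems.append(f"{name} is not installed")
            continue
        # Assumption: the package reports its version via __version__.
        found = parse_version(getattr(module, "__version__", "0"))
        if found < minimum:
            problems.append(f"{name} {minimum} or newer is required, found {found}")
    return problems
```

Calling `check_prerequisites()` and printing each returned string lists anything that is missing or too old; an empty result means the check found no problems.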

Model

Training

python3 main.py

Results

Query > happy birthday have a nice day

> thank you so much

> thank babe

> thank bro

> thanks so much

> thank babe i appreciate it

Query > donald trump won last nights presidential debate according to snap online polls

> i dont know what the fuck is that

> i think he was a racist

> he is not a racist

> he is a liar

> trump needs to be president